Daniel Slåttnes [Norway]

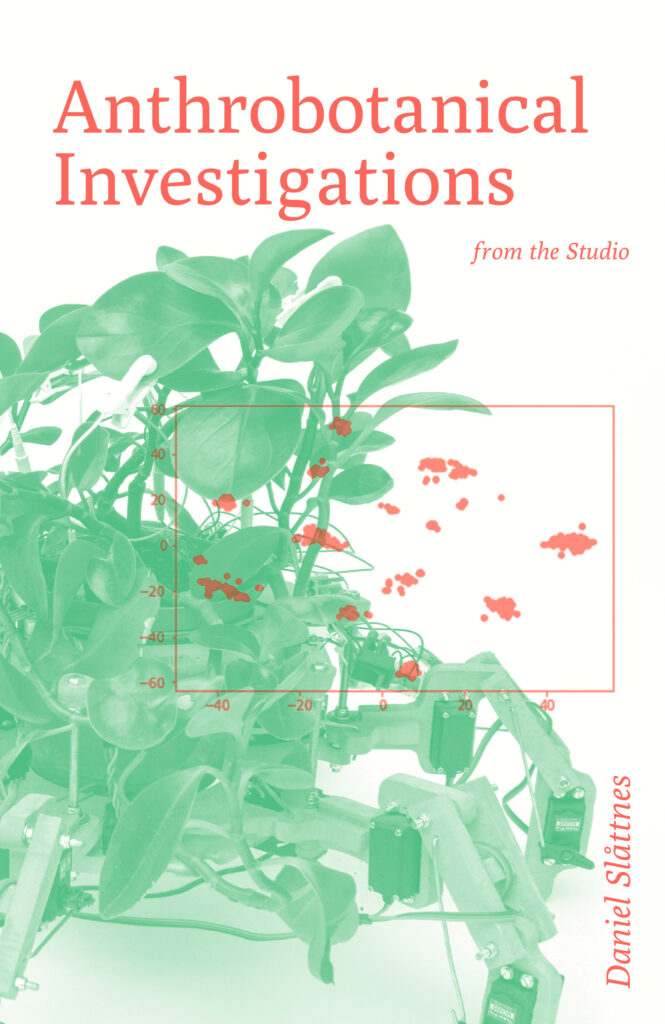

Plant Cyborgs, from the series Anthrobotanical Investigations, 2015-ongoing.

Peperomia obtusifolia (baby rubber plant), electronic and robotic components, software for tracing the plant’s biosignals and moving robotic prostheses.

“The plant is like an individual with whom I am trying to establish a relationship. What does it want? We cannot understand each other, we will never be able to share everything. But we can share our time together, our mutual relationship.” –Daniel Slåttnes, Anthrobotanical Investigations from the Studio

Daniel Slåttnes's Plant Cyborgs are an ongoing interspecies collaboration. Although one might assume at first glance that these plant-machine hybrids are a byproduct of the technoscientific pursuit of control, Slåttnes applies his considerable engineering and programming skills to listen to plants, to sculpt with them, and perhaps, eventually, to dance with them. To create the series of works from which the cyborgs originated, he began by meditating with the plant, sometimes for hours at a time. He then developed ways to record and amplify his own biosignals and the plant's, mediating energy and movement into a kind of soundtrack that both parties produce in relation to one another.

Daniel Slåttnes lives and works in Oslo, Norway, and Västra Ämtervik, Sweden. In recent years his research has included consciousness in plants, investigations into objecthood, and the shape of time. In several of his works he explores ways of establishing a kind of communication with the materials he works with. The meeting between plant and machine is a distinct focus, as both sit on the outskirts of what we perceive as conscious life.

Links

Pioneering botanical bioartist George Gessert responds to Plant Cyborgs here.